It has been over 7 months since we published our last speech recognition accuracy benchmark. Back then the results were as follows (from most accurate to least): Microsoft and Amazon (close 2nd), then Voicegain and Google Enhanced, and then, far behind, IBM Watson and Google Standard.

To connect to the Amazon Transcribe speech-to-text service, you need to provide AudioCodes with an account that has admin permissions to use the transcribe feature. VoiceAI Connect is configured to connect to Amazon Transcribe by setting the type parameter to aws under the providers section. For the languages supported by Amazon Transcribe, see Amazon's documentation. The language value is configured on VoiceAI Connect using the language parameter under the bots section.

Deepgram STT URL: wss:///audiocodes/stt. This URL is configured on VoiceAI Connect using the sttUrl parameter. Note: When configuring the providers, make sure that you define the API key as a "key" and not as a "token". To define the language, provide the appropriate BCP-47 language code from Deepgram's documentation. This is configured on VoiceAI Connect using the language parameter.

Deepgram supports a number of advanced features such as different models, keyword boosting, profanity filter, and more. Review Deepgram's features documentation for a full list. These additional features are configured on VoiceAI Connect using the sttGenericData field. The parameters are passed along as key-value pairs in a JSON object. For Deepgram parameters that can contain multiple items (such as keywords), replace the string value with an array of string values when more than one value is required; all of the values are then applied.
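As a rough illustration of the Deepgram sttGenericData rule described here (a single value stays a string, several values become an array of strings), the following Python sketch builds such a JSON object. The helper name build_stt_generic_data is hypothetical, the parameter names model, profanity_filter, and keywords and their values are assumed examples of Deepgram features, and the surrounding object layout is an assumption rather than the documented VoiceAI Connect schema. The URL is copied as it appears in the text, with its host missing.

```python
import json


def build_stt_generic_data(params):
    """Hypothetical helper: build the key-value pairs for sttGenericData.

    A parameter with one value is kept as a plain string; a parameter with
    several values is emitted as an array of strings.
    """
    data = {}
    for name, value in params.items():
        if isinstance(value, (list, tuple)):
            values = [str(v) for v in value]
            if not values:
                continue  # nothing to send for this parameter
            data[name] = values[0] if len(values) == 1 else values
        else:
            data[name] = str(value)
    return data


# Assumed example values; check Deepgram's features documentation for real ones.
stt_generic_data = build_stt_generic_data({
    "model": "general",
    "profanity_filter": "true",
    "keywords": ["AudioCodes", "VoiceAI"],
})

# Assumed layout: only sttUrl, language, and sttGenericData are named in the text.
bot_settings = {
    "sttUrl": "wss:///audiocodes/stt",  # host is missing in the source; left as-is
    "language": "en-US",                # BCP-47 language code
    "sttGenericData": stt_generic_data,
}

print(json.dumps(bot_settings, indent=2))
```

Normalizing in one place keeps the single-value and multi-value cases consistent, so a caller can always pass a list and still get a plain string when only one keyword is given.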

VoiceAI Connect can also use Azure's Custom Speech service. If you use this service, you need to provide AudioCodes with the custom endpoint details. For more information, see Azure's documentation.

To connect to Google Cloud Speech-to-Text service, see Google Dialogflow ES bot framework for required information. To define the language, you need to provide AudioCodes with the language code from Google's Cloud Speech-to-Text table. This value is configured on VoiceAI Connect using the language parameter. For example, for English (South Africa), the parameter should be configured to en-ZA.

To connect VoiceAI Connect to the Nuance Krypton speech service, it can use the WebSocket API or the open source Remote Procedure Calls (gRPC) API. To connect to Nuance Mix, it must use the gRPC API. VoiceAI Connect is configured to connect to the specific Nuance API type by setting the type parameter in the providers section to nuance or nuance-grpc. You need to provide AudioCodes with the URL of your Nuance speech-to-text endpoint instance. This URL (with port number) is configured on VoiceAI Connect using the sttHost parameter.

Note: Nuance offers a cloud service (Nuance Mix) as well as an option to install an on-premise server. The on-premise server is without authentication while the cloud service uses OAuth 2.0 authentication (see below).

VoiceAI Connect supports Nuance Mix, Nuance Conversational AI services (gRPC) API interfaces. VoiceAI Connect authenticates itself with Nuance Mix (which is located in the public cloud), using OAuth 2.0. To configure OAuth 2.0, use the following providers parameters: oauthTokenUrl, credentials > oauthClientId, and credentials > oauthClientSecret. Nuance Mix is supported only by VoiceAI Connect Enterprise Version 2.6 and later.

Nuance Vocalizer: To define the language, you need to provide AudioCodes with the language code from Nuance's Vocalizer Language Availability table. This value (ISO 639-1 format) is configured on VoiceAI Connect using the language parameter. For example, for English (USA), the parameter should be configured to en-US.

4.5) WebSocket-based API: To define the language, you need to provide AudioCodes with the language code from Nuance. This value (ISO 639-3 format) is configured on VoiceAI Connect using the language parameter under the bots section. For example, for English (USA), the parameter should be configured to eng-USA.
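For the Nuance settings (the type, sttHost, and OAuth 2.0 parameters), a minimal Python sketch of a providers entry might look as follows. The function name nuance_provider, the host names, the token URL, and the credential values are all hypothetical placeholders, and the exact nesting is an assumption based only on the parameter names given in the text.

```python
import json


def nuance_provider(stt_host, use_grpc=True, oauth=None):
    """Hypothetical helper: build a providers entry for a Nuance service.

    stt_host : speech-to-text endpoint URL, including the port number
    use_grpc : True -> type "nuance-grpc", False -> type "nuance" (WebSocket)
    oauth    : None for an on-premise server (no authentication), or a dict
               with token_url, client_id, client_secret for Nuance Mix
    """
    provider = {
        "type": "nuance-grpc" if use_grpc else "nuance",
        "sttHost": stt_host,
    }
    if oauth is not None:
        if not use_grpc:
            # Per the text, Nuance Mix must use the gRPC API.
            raise ValueError("Nuance Mix (OAuth 2.0) requires the gRPC API")
        provider["oauthTokenUrl"] = oauth["token_url"]
        provider["credentials"] = {
            "oauthClientId": oauth["client_id"],
            "oauthClientSecret": oauth["client_secret"],
        }
    return provider


# On-premise server over WebSocket: no authentication (placeholder host).
on_premise = nuance_provider("nuance.example.local:8443", use_grpc=False)

# Nuance Mix cloud service over gRPC with OAuth 2.0 (placeholder values).
mix = nuance_provider(
    "mix.example.com:443",
    oauth={
        "token_url": "https://auth.example.com/oauth2/token",
        "client_id": "<client-id>",
        "client_secret": "<client-secret>",
    },
)

print(json.dumps({"providers": [on_premise, mix]}, indent=2))
```

Rejecting OAuth settings on the WebSocket type mirrors the constraint stated in the text and surfaces a misconfiguration before it reaches the service.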

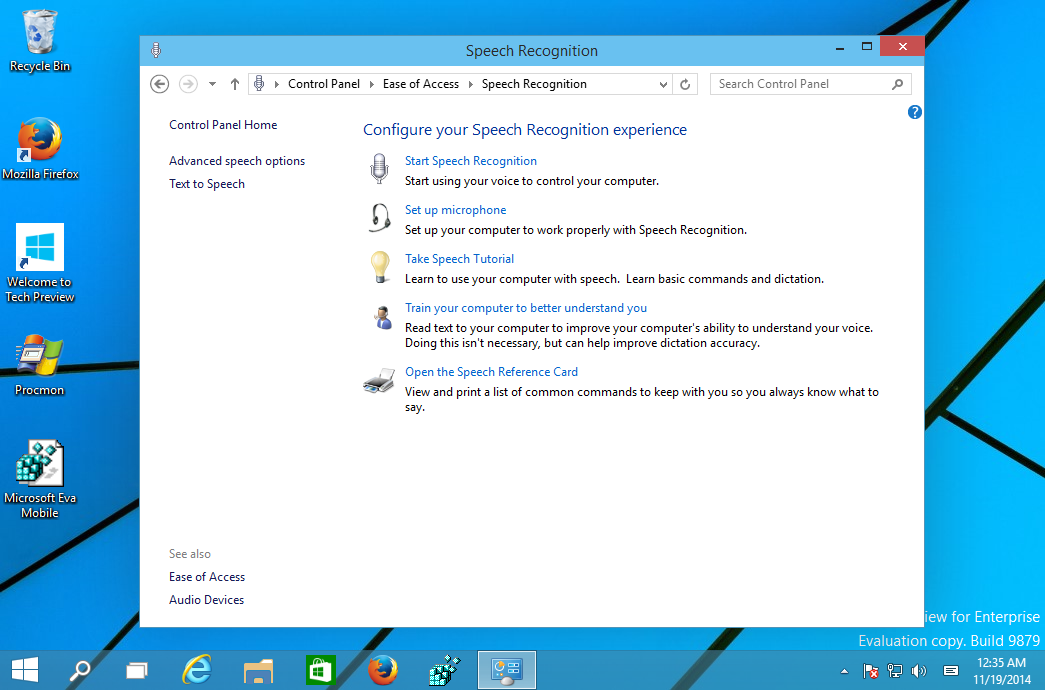

To connect VoiceAI Connect to a speech-to-text service provider, certain information is required from the provider, which is then used in the VoiceAI Connect configuration for the bot.

To connect to Azure's Speech Service, you need to provide AudioCodes with your subscription key for the service. To obtain the key, see Azure's documentation. The key is configured on VoiceAI Connect using the credentials > key parameter in the providers section. Note: The key is only valid for a specific region. The region is configured using the region parameter. To define the language, you need to provide AudioCodes with the language code from Azure's Speech-to-text table. For example, for Italian, the parameter should be configured to it-IT.
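The Azure settings (subscription key, region, and language code) can be sketched in Python as below. The function name azure_speech_config, the provider and bot names, the region value, and the layout of the providers and bots sections as lists are assumptions for illustration; only credentials > key, region, and the it-IT example come from the text.

```python
import json


def azure_speech_config(key, region, language):
    """Hypothetical helper: pair an Azure Speech key with its region.

    The key is only valid for one region, so both are required together.
    """
    if not key or not region:
        raise ValueError("Azure needs both a subscription key and its region")
    return {
        "providers": [
            {
                "name": "my_azure",            # placeholder provider name
                "credentials": {"key": key},   # credentials > key
                "region": region,              # region the key was issued for
            }
        ],
        "bots": [
            {
                "name": "my_bot",              # placeholder bot name
                "provider": "my_azure",        # assumed link to the provider
                "language": language,          # e.g. it-IT for Italian
            }
        ],
    }


config = azure_speech_config("<subscription-key>", "westeurope", "it-IT")
print(json.dumps(config, indent=2))
```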
